The False Contrast Cliché: A Content Strategy Fail

False contrast clichés are common in AI-generated content. LLMs have been trained on a ton of business language, and that happens to be the type of language that good writers avoid.

You'll see the false contrast cliché on LinkedIn, day in, day out.

This likely happens because people ask the LLM to "write for LinkedIn" or "create a post targeted at business leaders", so it defaults to this corporate format:

"It's not just [x], it's [y]".

This formula is used to make an impact. Hey! It's not just whatever thing you thought it was, it's this other thing that's much more important!

There's usually no real explanation or context, which is why it doesn't ring true.
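If you review a lot of drafts, you can pre-screen for this formula before reading closely. Here's a minimal sketch in Python, assuming a simple regular expression is enough to catch the most common phrasings. The pattern and the function name `find_false_contrasts` are my own illustration, not an established tool, and it will flag some legitimate sentences too, so treat the hits as candidates for a human to judge.

```python
import re

# Hypothetical pattern (an assumption, not a standard): "not just X, it's Y",
# "isn't only X, but Y", and close variants within a single sentence.
FALSE_CONTRAST = re.compile(
    r"(?:\bnot|n['’]t)\s+(?:just|only|merely|simply)\b"  # "not just", "isn't only"
    r"[^.!?\n]{1,80}?"                                   # the [x] part, same sentence
    r"[,;]\s*(?:but|it['’]s|it\s+is)\b",                 # ", it's" or ", but"
    re.IGNORECASE,
)


def find_false_contrasts(text):
    """Return each matched span so an editor can review it in context."""
    return [match.group(0) for match in FALSE_CONTRAST.finditer(text)]


if __name__ == "__main__":
    sample = "It's not just a tool, it's a revolution."
    for hit in find_false_contrasts(sample):
        print(hit)
```

A match only tells you where to look. Whether the contrast is false still depends on whether the writer explains it.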

Example of the False Contrast Cliché

For an example of the false contrast cliché, let's examine a recent post from the Think With Google site.

The post has multiple examples of this cliché (and several other signs of low-effort content). Here's the first one:

This is a false contrast cliché with some extra seasoning from some marketing lingo ("transform", "era", "profound").

In the next paragraph, we have a false contrast cliché with a slightly different format. The intended impact is the same:

Again, we have marketing jargon: "blueprint".

Further down in the post, here's the third example:

(I'm not even sure that one makes sense.)

Anyway, we get another attempt to make an impact further down...

...complete with a "paradigm shift".

There's a bonus ball: one more false contrast cliché in the email newsletter that promotes the post:

Look for AI slop squared

Once you know the clichés to look for, you don't need an AI content detector.

The by-doing cliché is common in content generated by Gemini or ChatGPT. That doesn't appear in this example, but there are some others.

The battle to avoid vagueness

"Landscape" is a common example of a word that is inserted when the LLM doesn't know what else to say. But "landscape" has likely been prohibited by the prompt in this post, so Gemini has reached for something semantically similar:

...along with the word "shape", which is another tell-tale sign that the content is low effort ("reshape", too, which is more common).

(As I researched this post, I looked for content I could boost and link to. Every single article I found had the word "landscape" in the first or final paragraph. Ban this word from your content!)
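If you want to enforce a ban like this across a content team, a small script can count the offending words before a draft goes to an editor. This is a rough sketch under my own assumptions: the term list is drawn from the examples in this post, and `count_flagged_terms` is a hypothetical helper name.

```python
import re

# Terms called out in this post; extend the list to suit your own style guide.
FLAGGED_TERMS = ["landscape", "reshape", "blueprint", "paradigm shift"]


def count_flagged_terms(text, terms=FLAGGED_TERMS):
    """Count case-insensitive occurrences of each flagged term in the text."""
    lowered = text.lower()
    counts = {}
    for term in terms:
        # \w* also catches simple suffixes such as "landscapes" or "reshaped".
        # Inflections that change the stem, such as "reshaping", are missed.
        hits = re.findall(r"\b" + re.escape(term) + r"\w*", lowered)
        if hits:
            counts[term] = len(hits)
    return counts


if __name__ == "__main__":
    draft = "The marketing landscape will reshape every landscape."
    print(count_flagged_terms(draft))
```

A count above zero is a prompt to rewrite the sentence with something specific, not proof that the draft was machine-written.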

Inconsistent brand messaging

Your content needs to align with brand messaging across channels. That's one of the ways to reinforce what LLMs say about you.

Interesting, then, that this is the first and only time I've seen Google admit that AI Mode is reducing click-through rates:

And that is inconsistent with what the Vice President of Search is saying.

I can't stress enough how important this is: say the same thing everywhere.

If you want LLMs to represent you accurately, reinforce the patterns they're looking for.

Use AI as an assistant, not your boss

AI-assisted workflows are the key to business survival. I'm all for making things better and more efficient.

But content like this pushes the human writer into a supporting role when they should be fully in charge.

Without someone improving LLM outputs, content slides into the mushy middle. Everything sort of... sounds fine, and makes sense, but means nothing.

There's nothing to excite a customer, or a reader, and get them to act.

And on that point, I agree with Greg Isenberg:

ai-generated content is creating a monoculture of ideas. when everyone uses the same models, we get the same outputs. original human thinking is becoming the ultimate premium. be weird. weird will sell.

Weird > beige.

The content aims to give the illusion of expertise. But expertise is hard to scale in an authentic way, and it's obvious here.

This is not about bad writing, or being a snob, or rejecting AI completely.

The "not just X, but Y" cliché signals to the reader that your brand has absolutely nothing to differentiate it from your competitors, and you aren't willing to hire an editor to check what you're saying on your own website.

Most importantly, it gives the reader no reason to trust that you're even thinking about their problems.

Brands should avoid signs of AI writing

Brands can be weird, unique, distinctive, and successful. And the brands that stand out are the brands that will signal authenticity.

The ones that don't stand out risk "AI blindness", where customers glaze over or lose faith in what you're saying.

I'm not the only person who thinks this way.

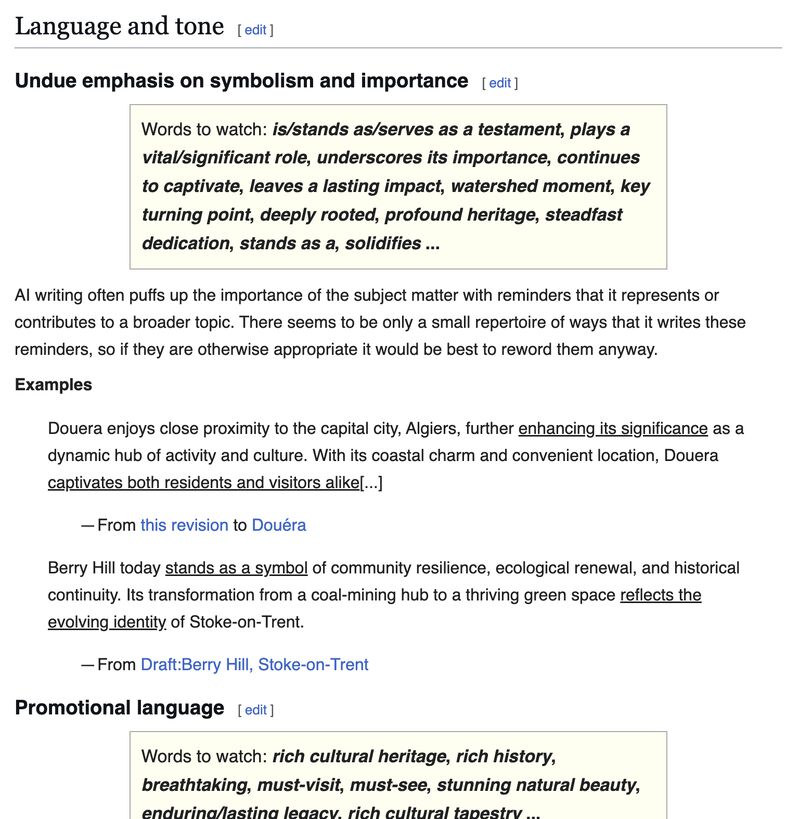

About a month ago, I posted about Wikipedia's excellent page on detecting AI-generated content:

It's good advice, and I encourage you to read through the examples, which are comprehensive.

There are good reasons for Wikipedia prohibiting AI clichés:

- Generic phrasing and clichés in AI-generated content signal that little research was done when the content was created

- Citations and statistics are often wrong in this type of content, and that's a huge problem for businesses and consumers alike

- If AI clichés were allowed to spread, Wikipedia would lose its authority as a trusted resource

You should be as concerned about being a trusted resource as Wikipedia is.

And let me reiterate that this is not Wikipedia editors being sticklers, or being needlessly picky about what they will and won't approve.

Accuracy is known to be one of the issues with unchecked AI-generated content, and this study goes into the other arguments against it:

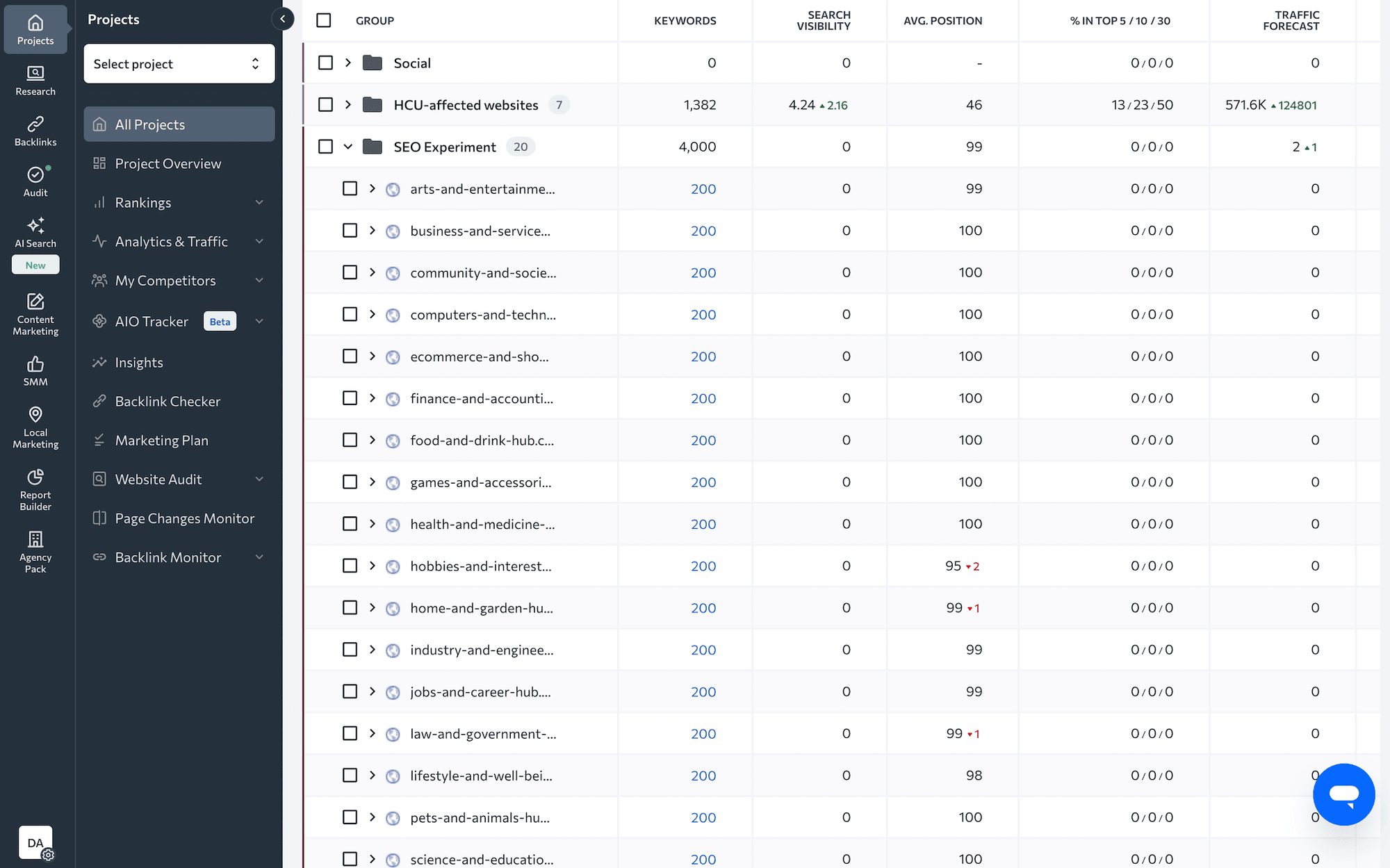

This is not about whether AI-generated content will rank or not

It likely will rank. Maybe not forever...

...but it may rank in the short term.

Ultimately, rankings don't mean a lot if nobody's buying anything. We should be more concerned about the long-term impact on trust.

When using AI assistance for content, it has to be the bread around the burger, not the entire meal:

- Use AI to brainstorm, check your work, review it, improve, and make corrections

- Use tools that take the pain out of time-consuming tasks that slow down writing

- Use AI to write, but make it better; add your own voice as you scoop out the slop

How to detect AI slop: the human framework

Here's the framework I use to review content for signs of effort. This will catch content that is too reliant on AI, but applies more generally to low-quality submissions as well:

- Does the content have unnatural repeated phrasing?

- Does the content back up claims with proof, data, or expertise?

- Does the writer show that they tested their own process or learned lessons?

- Is the writer's tone, style, or formatting changing from one section to the next?

- Does the content give me something new, actionable, or unique?

Have you signed up for my Substack? I'm sending weekly roundups about changes that content marketers need to know about. Get involved here - it's free:
