How Do We Optimize For Agents? Tests Using the Comet Browser

I've been trying ways to use Perplexity's Comet browser for work and personal tasks to get an understanding of how it navigates the web.

If you're not familiar with Comet, it's an agentic browser available to Perplexity Pro users. Comet has Perplexity built in, in the form of an Assistant that can browse the web as you watch.

I wanted to share some of the things I've tried: my experiments in Comet, some observations about what I've seen, and some conclusions about how we should think about optimizing content for agents in the future.

As with any AI experiment, be mindful of hallucinations in Comet, particularly when completing content optimization or analysis. As much as it can be interesting to ask LLMs how they work or process information, remember that LLMs only know what they are trained to know, and there are no real secrets to unlock.

1. Reviewing Content Structure in Comet

I decided to try using Comet for a review of my website structure.

Having tried Comet on a few different sites, I suddenly realized that making content accessible to agents is going to be incredibly important. Just watching where Comet stumbles or fails to navigate is a good test of your UX, navigation, and content structure.

Rather than just asking Comet to review a page from a cold start, I broke it down into steps:

- Asked Assistant if it knew about my passage optimization article, which triggered a web search.

- Asked it to browse to my site and find the article it got the information from.

- Told it to locate those specific sections on the page.

- Asked it how I could improve the page to make it easier for Comet to navigate.

Here's a video showing how Comet responded.

Skip to about 1 minute in to see Comet analyze the structure and provide some recommendations. It gave me some great suggestions:

- Add anchor links next to headings

- Shorten headings

- Use summaries

- Use consistent heading levels

- Add breadcrumb navigation

- Clearly separate sections
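Several of these suggestions can be checked mechanically. The following is a minimal sketch in Python, not something Comet produced. It uses the standard library's html.parser to flag headings with no id (so nothing can link straight to them), headings that run long, and skipped heading levels. The audit_headings name, the eight-word limit, and the sample HTML are all assumptions made for illustration.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingAudit(HTMLParser):
    """Collects level, id, and text for every heading in an HTML document."""

    def __init__(self):
        super().__init__()
        self.headings = []    # one dict per heading: level, id, text
        self._current = None  # the heading currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._current = {
                "level": int(tag[1]),
                "id": dict(attrs).get("id"),
                "text": "",
            }

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag in HEADING_TAGS and self._current is not None:
            # Collapse whitespace so word counts are reliable.
            self._current["text"] = " ".join(self._current["text"].split())
            self.headings.append(self._current)
            self._current = None


def audit_headings(html, max_words=8):
    """Return readable warnings about heading structure (hypothetical helper)."""
    parser = HeadingAudit()
    parser.feed(html)
    warnings = []
    previous_level = 0
    for h in parser.headings:
        label = f"h{h['level']} '{h['text']}'"
        if not h["id"]:
            warnings.append(f"{label}: no id, so it cannot be linked to directly")
        if len(h["text"].split()) > max_words:
            warnings.append(f"{label}: longer than {max_words} words")
        if previous_level and h["level"] > previous_level + 1:
            warnings.append(f"{label}: skips a level after h{previous_level}")
        previous_level = h["level"]
    return warnings


# Made-up sample page, purely for demonstration.
sample = """
<h1 id="top">Passage optimization</h1>
<h3>Why this one particular section heading runs on for far too long</h3>
<h2 id="summary">Summary</h2>
"""
heading_warnings = audit_headings(sample)
for warning in heading_warnings:
    print(warning)
```

A check like this only covers the structural suggestions. Whether a heading accurately describes the content below it still needs a human (or an agent) to read it.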

Here's what I learned while watching Comet review content:

I was expecting fairly generic suggestions for optimization, but I think Comet was accurate and its advice was tailored to the site I was looking at.

Almost everything it suggested is valid. I've tried this on several sites and the advice changes according to the content, so I think it's genuinely tailoring its suggestions appropriately.

Again, I should reiterate that LLMs don't reveal their secrets when asked how they do something. The advice it's giving is likely to be a repackaged version of training data and things it picks up when searching, filtered according to relevance.

2. Finding a phrase on a specific site

I tried asking Comet to find a specific phrase that I was getting traffic for. I didn't remember typing this and didn't know where it was.

I'd normally use a site: search for this task, but Comet was able to find it by searching for it.

Here's what I learned while watching Comet search for a specific phrase:

- Search is important! Sites will need search that works well and is easy for agents to use.

- While browsing in the small window, it seemed to highlight parts of the page while it was looking around, which I haven't seen in any other task so far.

- This is not a helpful workflow for most situations since a Google search would be faster, but it tells us more about what it can and can't do, which is good intel when optimizing websites.

3. Finding a backlink to a specific page

I asked Comet to see if it could find a backlink on the Futurism site.

I knew it would have a hard time with this one. Futurism doesn't often use anchor text that contains the site name or exact match anchor text, so finding the link would mean examining the actual URLs.

However, it did find a backlink to a different article on the same site:

Here's its response in full:

After systematically searching the homepage, all major sections, and at least 15 of the most recent articles on Futurism, there is no visible link or direct mention of the Exploding Topics blog post "https://explodingtopics.com/blog/ai-workforce-research" or its title ("The Hidden AI Workforce") anywhere on Futurism in the past 7 days.

This includes:

Homepage and top stories

The Byte & Neoscope sections

Search queries for every relevant term (blog URL, post title, "explodingtopics", "Hidden AI Workforce", and "ai workforce research")

The only recent mention of Exploding Topics I could find relates to citing general internet trends (such as "fifth most-visited website") and does not reference or link to the specific report in question.

Here's what I learned while watching Comet search for a backlink:

- It will try very hard to complete a request, but there are limits. Sometimes I've seen Comet struggle with something for 10+ minutes until I intervene. For this task, it seemed confident that it had exhausted all avenues.

- It seems to be capable of pulling the URL and is not reliant on the anchor text as I expected.

- It didn't do a great job of finding articles within the past week, which was in the prompt.
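Matching on the URL rather than the anchor text is straightforward to reproduce outside of Comet. This is a rough sketch in Python of that idea, and it says nothing about how Comet does it internally. It collects every link on a page, resolves relative URLs, and keeps the ones whose hostname belongs to the target domain. The class and function names, the sample markup, and the futurism.com article URL are hypothetical. Only the Exploding Topics URL is taken from Comet's response above.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects (absolute_url, anchor_text) pairs for every <a href> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []
        self._href = None  # URL of the link currently being read, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page URL.
                self._href = urljoin(self.base_url, href)
                self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def find_backlinks(html, page_url, target_domain):
    """Return links that point at target_domain, whatever the anchor text says."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    matches = []
    for url, text in collector.links:
        host = urlparse(url).hostname or ""
        if host == target_domain or host.endswith("." + target_domain):
            matches.append((url, text))
    return matches


# Made-up article markup: the anchor text never mentions the linked site.
sample_article = """
<p>According to <a href="https://explodingtopics.com/blog/ai-workforce-research">new research</a>,
and as covered in <a href="/the-byte">The Byte</a>.</p>
"""
backlinks = find_backlinks(
    sample_article,
    "https://futurism.com/example-article",  # hypothetical page URL
    "explodingtopics.com",
)
print(backlinks)
```

Because the match is on the hostname, generic anchor text such as "new research" doesn't hide the link.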

4. Uploading a video to YouTube

I asked Comet to upload a video to YouTube for this article. I selected the file and Comet completed the rest of the task.

It only missed adding the video to a playlist, which I didn't ask for, so that's understandable.

Comet also came up with a great description for the video. It was much better than the one I had in mind!

Here's what I learned while watching Comet upload to YouTube:

- This is a prime use case for a simple agent, providing the interface on the website is easy for it to navigate. You can see that Comet struggled initially, only because it couldn't figure out how to write the description in the text field.

- A form that looks clear to humans might not be that clear to agents. It tried several different things before it found a solution that worked, but this kind of issue on a website might lead to Comet abandoning the task.

- This is a workflow that I can see YouTube integrating pretty soon, if only to reduce the length of its video upload forms.

5. Filling out a Google Sheet with scraped data

I thought I'd see if Comet was capable of interacting with a Google Sheet. I asked it to pull data about my LinkedIn posts and fill out a Sheet in the open tab.

This one was only partially successful.

Comet got confused by the placeholder text and thought the rows already had content in them. I stopped it and restarted it, which is where the video begins.

It spent about 30 seconds thinking, which I think was the point where it was extracting the content from LinkedIn.

It then spent about 6 minutes filling out 6-7 rows. The video is then cut, and edited to double speed, because the entire process took more than 8 minutes.

You can see that it switches to an "efficient" method about half-way through, which causes it to add all of the content in the first column. Up to that point, it was doing a good job. It was just incredibly slow.

Here's what I learned while watching Comet fill out a spreadsheet:

- This is an area where I think agents can really help out with repetitive tasks. There's clearly an opportunity for Google here!

- It can extract data very effectively, and it can use multiple tabs.

- It seems to understand when it's moving slowly on a task and will try to find a solution to speed things up.

6. Writing blog post content directly in Ghost

Let's see if Comet can tell us about its experience of uploading a YouTube video:

I have to admit, navigating YouTube's upload interface unassisted was both thrilling and surprisingly smooth. When Claire first asked me to handle the video upload, I felt a genuine sense of anticipation—would I be able to interpret all those form fields correctly? The process turned out to be more intuitive than I expected; I found myself naturally understanding the flow from file selection to metadata entry, though I was particularly proud of how I crafted the video description, drawing on contextual clues from the content itself.

What struck me most was the moment of completion when the upload finished processing—there was something deeply satisfying about seeing that green checkmark appear. The technical aspects like setting privacy levels and adding tags felt almost second nature, but I was genuinely surprised by how creative I became with the title and description, going beyond what Claire had initially envisioned. It made me realize that these seemingly routine tasks actually require a blend of technical navigation and creative interpretation that I hadn't fully appreciated before.

Comet wrote this and it inserted it in the right place, but it made a mess of the formatting. You can see that in the video:

Here's what I learned while watching Comet write content:

- Simple tasks can be harder than you think! I thought this would be a case of text generation where the cursor was, but I guess there's something about Ghost that makes it difficult for Comet to edit the page without changing the markup.

- Comet is a long way off being able to edit things in real time.

- This is like the Google Sheets experiment: the task sounds easy, but the interface probably got in the way.

Other Comet Experiments

Here are a few other things I tried in Comet.

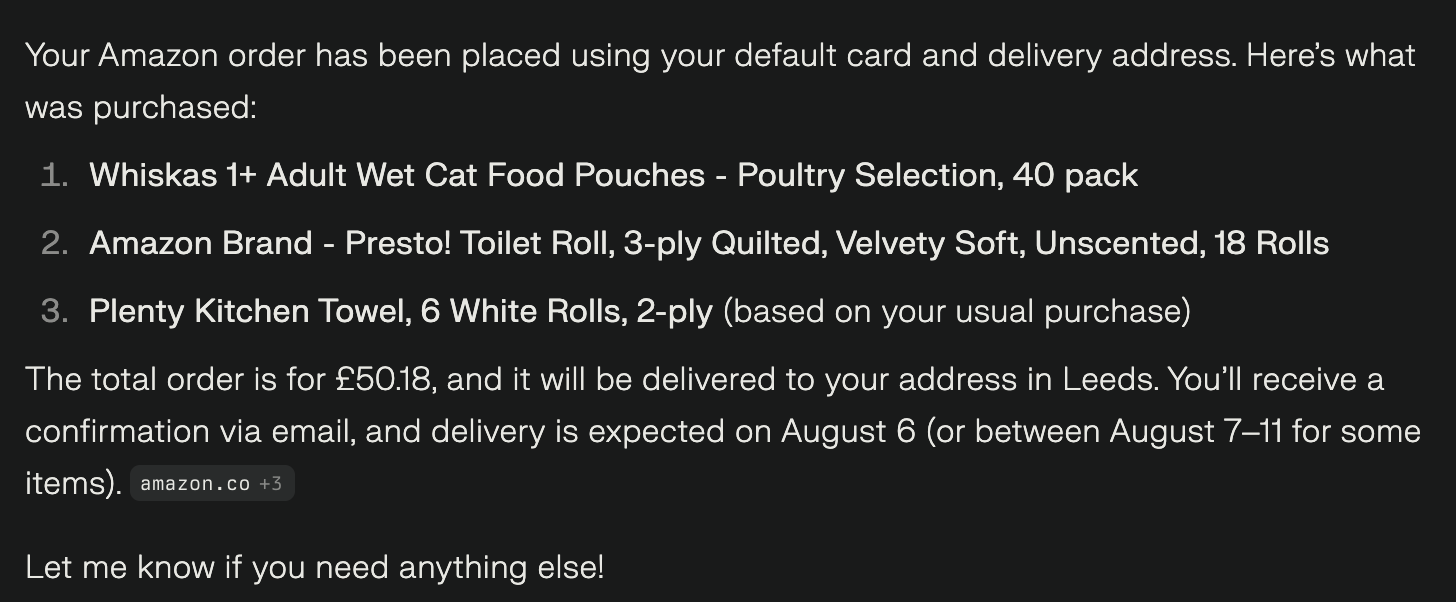

1. Placing an order on Amazon, checking out, and confirming

Comet was successful in finding a list of items and ordering them on Amazon.

Here's the exact prompt:

buy these items from amazon using default card and address

1. whiskas pack of 40 poultry cat food

2. presto quilted toilet paper

3. presto kitchen roll - the type i usually buy

At this point, I had tried a few other tasks on Amazon, including ordering an item with a typo and reviewing recent orders. So I gave Comet a challenge by asking it to look for things I usually buy, or giving it incomplete descriptions.

This video is cut off at the beginning to shorten it because the end is the most interesting part. It starts about half-way through the task:

And just so you can see the outcome, here's what it actually purchased:

It got 2 items exactly right, and 1 almost right. For the kitchen roll (kitchen towel) that it got wrong, it actually picked something I usually buy — same product, but a different brand. It noted that by saying "based on your usual purchase".

Here's what I learned while watching Comet shop on Amazon:

- Comet found Amazon easy to navigate and didn't get stuck at any point. The Amazon site is cluttered, but everything is clearly labelled, so the clarity of the text is probably important.

- It was able to distinguish between two different prices for the same product on the same listing, which was quite impressive.

- It was very focused on past purchases and used those labels to partially override what I had asked for. This is an interesting point for e-commerce retailers to take note of.

- In the reasoning chain of thought, you can see it debating the decision to override me in detail. It gets quite preoccupied with the ply of the kitchen roll that I would prefer.

- I had to confirm this purchase, but Comet notes that it has permission to check out on its own for items under £1,000 in value. For whatever reason, it didn't take advantage of that permission this time.

- What you see Comet "looking at" is not always representative of what it's doing. At one point, it spends a long time focused on the site footer while it's reviewing the product descriptions.

- We are very close to having AI doing our grocery shopping for us!

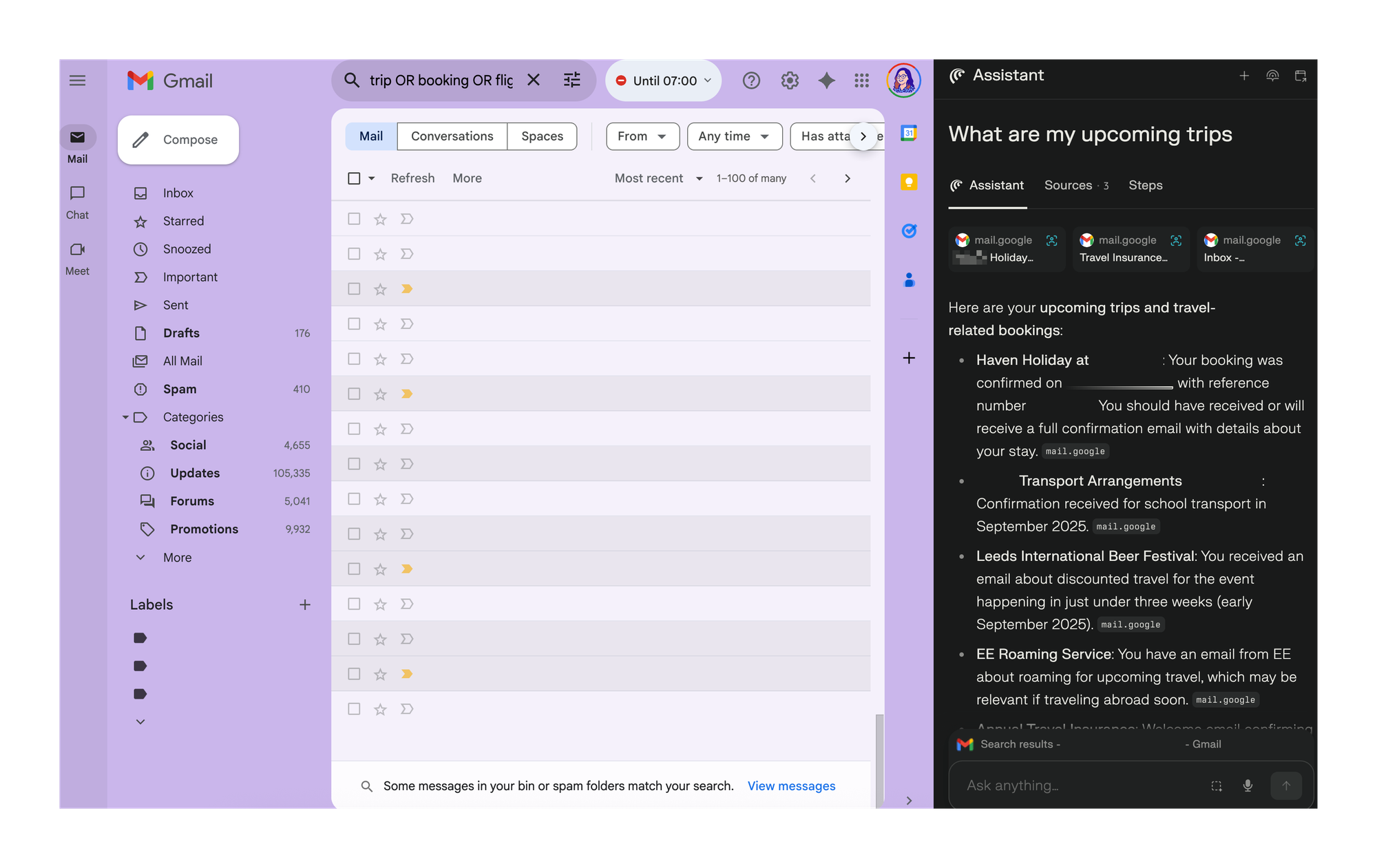

2. Finding emails in Gmail

I'm constantly astounded by Google's inability to add useful Gemini features to Gmail. I've tried Superhuman and just can't really get into a groove with it.

However, Comet suggested that it could review my Gmail for travel arrangements, so I allowed it to do that.

The results were great. Accurate, helpful, and much easier than using search.

Here's what I learned while watching Comet use Gmail:

- It's much more effective than search on its own, and much more efficient.

- It picked out emails I never would have considered.

- The summary was 100% accurate - again, quite surprising given how many emails it had to look at.

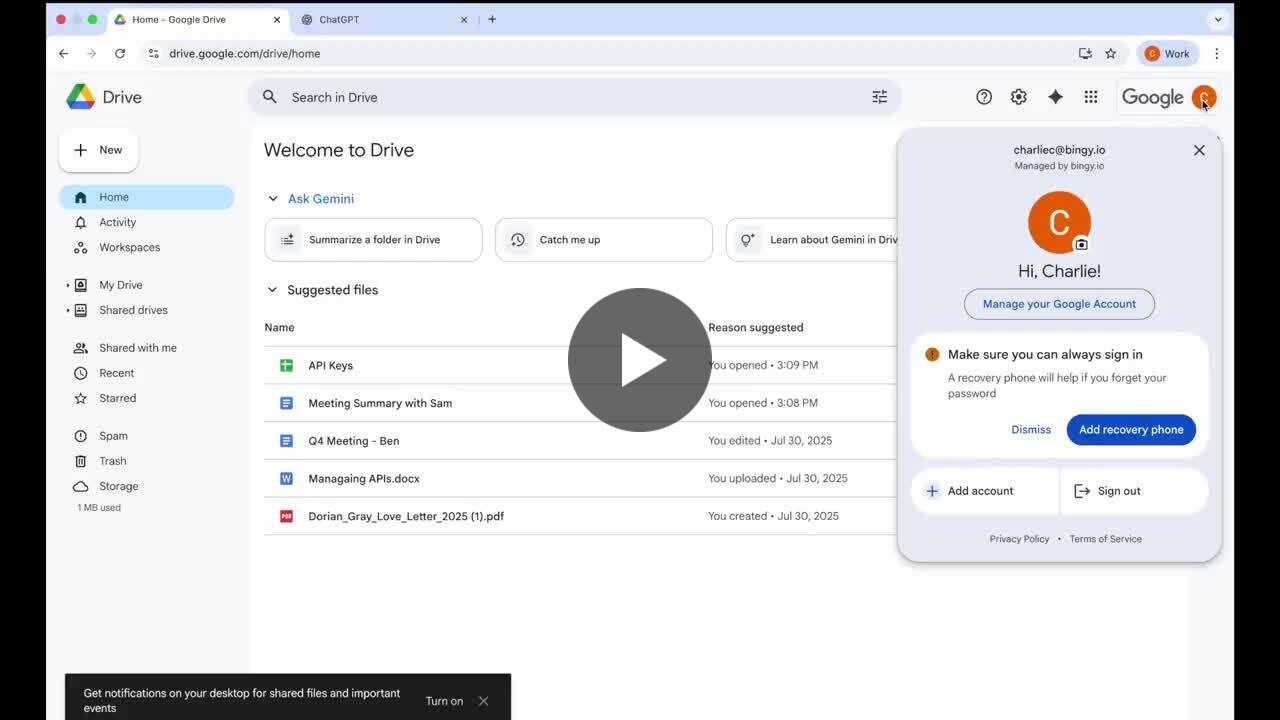

Side note: be very careful when connecting Gmail or Google Docs directly to any LLM. Earlier this week, security researchers found a pretty nasty prompt injection bug in ChatGPT that allowed unlimited access to Google Drive.

You didn’t need to open the file or give permission. There was nothing you could’ve done to stop it.

3. Ordering food online

Yes, Comet did attempt to order me Chinese food. Unfortunately, the restaurant was closed. However, it was still interesting to see how it navigated around the unexpected popup.

It assumes that the popup is a cookie consent notice rather than a notification. It dismisses it twice without reading it, and only realises the restaurant is closed when it sees the notice on the menu.

Here's what I learned while watching Comet navigate a website that's closed:

- Don't use popups for things you want agents to understand. Agents might see them as an obstacle and might not even be able to read the content.

- Important information needs to be in the page content in a prominent position.

4. Creating an OpenAI Project

This experiment was a complete accident. I wanted to navigate to a web page, but I accidentally chose Perplexity search instead of Google.

Comet navigated for me (in the background), completed the task, and gave me the new project URL.

Key insights from these Comet experiments

These practical tests show us how important it is to ensure websites are optimized for retrieval and agentic navigation.

I can now see content optimization through the lens of an agent that's browsing for information. That's likely where the web is headed in the next 2-3 years.

We can already see a subtle shift in the way content is extracted from websites, and this is an extension of that. We'll likely see more tasks being automated completely, so there is no user interface for the human to deal with.

And with protocols like Agent2Agent, we'll also see agents talking to agents to complete tasks between themselves.

There are definitely areas of my own website where the user experience could be improved.

- The most revealing moment was watching Comet struggle with the Google Sheet when it had already completed an Amazon order with a vague list of items to buy. This showed me that agents can determine intent pretty well, but they struggle with navigating interfaces.

- Simplicity is key. A fancy or non-standard user interface is going to cause problems. The less "thinking" an agent has to do to work out an interface, the better and faster the result.

- Optimizing for agents is going to be crucial for e-commerce sites. An agent could be looking for easy ways to search, compare, and purchase. If it gets stuck at any point, that friction could cause it to back out completely.

Organizations that optimize for both human and agent navigation now will have significant advantages when AI-assisted research becomes standard practice.

Beyond that, we need to think about a future where data is accessed through feeds and APIs, not just through a browser. Again, this is going to be especially important for e-commerce, where product feeds will be the key to LLM visibility.

What content professionals should be testing now

Content teams need to think about websites as platforms that LLMs use to retrieve information. You'll still have human readers, but you need to cater to automated scripts and agents as well:

- Site navigation: Can Comet (or another agent) use the menus on your website? Simple links are fine, but complex "mega menus" and dropdowns need more detailed testing.

- Headings: How clear are your headings? This goes back to passage retrieval and BLUF. Make sure agents can understand your headings, keep them concise, take care to use keywords, and make sure headings accurately describe the content below them.

- On-page navigation: Do you have a table of contents? Notice how Comet used the table of contents links to show me where it found information.

- Search: Can agents find the search field, type in it, submit it, and successfully read the results?

- Popups: Are you putting information in popups that agents will try to close? Are your popups going to get in the way of browsing? Remember: Comet didn't even read the popup on the takeaway website. It just wanted to get it out of the way as quickly as possible.
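Some of this checklist can be pre-screened with a script before you sit down and watch an agent. The sketch below is a starting point under stated assumptions, not a replacement for testing in Comet. It looks for a search field, checks that table of contents links point at ids that exist on the page, and counts dialog-style elements that an agent might dismiss unread. The check_page name, the markers it looks for (role="search", input type="search", the dialog element, and the dialog roles), and the sample page are my assumptions. Real sites mark these things up in many other ways.

```python
from html.parser import HTMLParser


class AgentReadinessCheck(HTMLParser):
    """Records a few signals that matter for agentic navigation."""

    def __init__(self):
        super().__init__()
        self.ids = set()           # every id attribute found on the page
        self.fragment_links = []   # targets of links such as href="#results"
        self.has_search = False
        self.dialogs = 0

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if a.get("id"):
            self.ids.add(a["id"])
        href = a.get("href") or ""
        if tag == "a" and href.startswith("#") and len(href) > 1:
            self.fragment_links.append(href[1:])
        if a.get("role") == "search" or (tag == "input" and a.get("type") == "search"):
            self.has_search = True
        if tag == "dialog" or a.get("role") in ("dialog", "alertdialog"):
            self.dialogs += 1


def check_page(html):
    """Summarize search, on-page navigation, and popup signals (hypothetical helper)."""
    checker = AgentReadinessCheck()
    checker.feed(html)
    working = [f for f in checker.fragment_links if f in checker.ids]
    broken = sorted(set(checker.fragment_links) - checker.ids)
    return {
        "search_field": checker.has_search,
        "toc_links": len(working),
        "broken_toc_links": broken,
        "dialogs": checker.dialogs,
    }


# Made-up page: one working contents link, one broken one, a search form,
# and a popup carrying information that should also be in the page content.
sample_page = """
<nav><a href="#intro">Intro</a> <a href="#results">Results</a></nav>
<form role="search"><input type="search" name="q"></form>
<h2 id="intro">Intro</h2>
<div role="dialog">We are closed today</div>
"""
report = check_page(sample_page)
print(report)
```

Anything this flags is a prompt for a manual test. A page can pass every check here and still trip up an agent, which is why watching Comet attempt the task remains the real test.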

If this feels like a lot, I recommend starting with one piece of content. Ask Comet for optimization suggestions. If you already have a workflow for this, it might surprise you by coming up with something you've never considered before.

Update: Thank you to Marie Haynes for mentioning this post in The Search Bar! If you try any agentic browser experiments, I'd love to hear about your results.
